Infrastructure Design for Autonomous Vehicle Development

Santosh Rao

In my last blog, I talked about ways to leverage data engineering and data science technologies to solve autonomous vehicle (AV) challenges, including approaches for gathering data from test and survey vehicles, HD mapping, training DNNs, and simulation.

There are a variety of possible entry points for companies initiating or expanding an autonomous vehicle program. In this blog, I’ll explore the pros and cons of four common design patterns:

- Extension of big data analytics

- Extension of high-performance computing (HPC)

- Cloud-based AV development

- Greenfield AV deployment

Design Pattern 1: AV Development as an Extension of Big Data

One common design pattern is the extension of an existing big data analytics environment—usually built on Hadoop and/or Spark—to support autonomous vehicle development. Since many players in the automotive marketplace already have analytics systems in place, perhaps in conjunction with existing advanced driver assistance systems (ADAS) programs, this makes a certain amount of sense.

Limitations of the big data approach

As I discussed in the white paper Designing and Building a Data Pipeline for AI Workflows, the Hadoop Distributed File System (HDFS) common in big data environments is not optimized to deliver the performance required for training deep neural networks (DNNs). HDFS has had limited exposure to diverse data workloads with varying performance characteristics. Big data vendors have undertaken significant (and proprietary) rewrites to deal with performance needs in the transition from MapReduce to Spark; AI introduces another wrinkle in the HDFS story.

In addition, HDFS typically maintains three copies of each data object, increasing capacity consumption. If you adapt your analytics environment to support an AV program, performance, capacity, and the cost to scale to many petabytes of data will likely become issues.
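To make the capacity impact concrete, here is a rough back-of-the-envelope sketch in Python. It assumes only the three-way replication mentioned above and ignores compression, erasure coding, and file system overhead. The function name and the 10 PB data set size are hypothetical, chosen purely for illustration.

```python
def raw_capacity_pb(usable_pb: float, replication_factor: int = 3) -> float:
    """Raw storage (in PB) needed to hold `usable_pb` petabytes of data
    when every object is stored `replication_factor` times."""
    if usable_pb < 0 or replication_factor < 1:
        raise ValueError("usable_pb must be >= 0 and replication_factor >= 1")
    return usable_pb * replication_factor


if __name__ == "__main__":
    # Hypothetical data set size, not a figure from any real AV program.
    usable = 10.0
    raw = raw_capacity_pb(usable)
    print(f"{usable} PB of data needs roughly {raw} PB of raw capacity")
```

The point of the sketch is simply that the replication factor multiplies every petabyte you ingest, so the gap between usable and raw capacity widens as the data set grows.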

Of even greater concern, vendors in the Hadoop/Spark ecosystem appear to be facing significant headwinds. The futures of previous high flyers are now in doubt. Unless you’re willing and able to switch to an open-source model in the future, you might think twice before relying on one of these vendors to support AV development programs that are likely to persist for many years.

NetApp big data solutions

NetApp offers a variety of big data solutions that accelerate your environment while reducing the capacity required and streamlining data protection. For example, the NetApp solution for in-place analytics eliminates data copies that create bottlenecks in your AI pipeline. NetApp customers have benefited from in-place data access to accelerate all types of analytics for years.

Design Pattern 2: AV Development as an Extension of HPC

Another common entry point for AV development is the expansion of an existing HPC environment. HPC has long been used in the automotive industry to support a variety of design and simulation use cases. As a result, the HPC model is a common design pattern for autonomous vehicle development. A typical HPC environment includes GPU-equipped servers, often in conjunction with a parallel file system such as Lustre or GPFS.

Limitations of the HPC approach

Doing your AV development in a familiar environment that’s already in place can make a lot of sense for teams just starting out. However, parallel file systems such as Lustre and GPFS are optimized for batch processing and may not deal well with workloads consisting of tens or hundreds of thousands of small files. Metadata access with these file systems can become a bottleneck due to reliance on separate metadata servers. As your AV environment ingests data from more and more test cars, I/O scaling becomes a challenge, impeding throughput.
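A simple model can illustrate why small files shift the bottleneck toward metadata. The Python sketch below is a deliberately crude estimate, not a benchmark: it assumes one metadata lookup per file, performed serially, and a fixed streaming bandwidth. The function name and every number in the example are hypothetical.

```python
def estimated_read_seconds(num_files: int,
                           avg_file_bytes: float,
                           metadata_latency_s: float,
                           bandwidth_bytes_per_s: float) -> tuple[float, float]:
    """Return (metadata_time, transfer_time) in seconds for reading a data set.

    Simplifying assumptions: one serialized metadata lookup per file and a
    constant data transfer bandwidth.
    """
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth_bytes_per_s must be positive")
    metadata_time = num_files * metadata_latency_s
    transfer_time = num_files * avg_file_bytes / bandwidth_bytes_per_s
    return metadata_time, transfer_time


if __name__ == "__main__":
    # Hypothetical workload: 200,000 files of 100 KB each,
    # 1 ms per metadata lookup, 1 GB/s of streaming bandwidth.
    meta, xfer = estimated_read_seconds(200_000, 100_000, 0.001, 1e9)
    print(f"metadata: {meta:.1f} s, data transfer: {xfer:.1f} s")
```

Under these assumed numbers, the time spent on metadata lookups exceeds the time spent moving data, which is the pattern described above. Real systems overlap and cache metadata operations, so treat this only as an intuition aid.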

Researchers at leading US supercomputing centers are learning this lesson as they adapt their environments for deep learning in addition to simulation: “Whether GPFS, Lustre, or any of the other file systems for HPC sites, there is no keeping up with the raw bandwidth required, at least not for [deep learning] deployments at scale.”

NetApp HPC solutions

As with big data, NetApp offers a variety of solutions tailored to HPC. NetApp HPC solutions are fast, reliable, scalable, and easy to deploy. NetApp can help you accelerate your existing parallel file system deployment, while NetApp StorageGRID technology delivers reliable object-based storage for archival or other needs.

Design Pattern 3: Cloud-Based AV Development

Some companies developing AV programs may have done much of their AI work to date in the cloud, perhaps in partnership with one of the big cloud vendors. This approach combines the nearly infinite capacity of cloud object stores with the ability to utilize the latest GPUs, or other specialized hardware, on a pay-as-you-go basis without big upfront investments in infrastructure.

Limitations of a cloud-based approach

The potential limitations of the cloud-based approach probably won’t come as a big surprise: performance and cost. Cloud object storage is generally not designed to deliver the level of performance that AV programs will require. Object stores were originally intended for cloud archiving rather than performance, but in many cases, they have become the de facto data store for cloud projects. For AV training needs in particular, cloud object stores leave a lot to be desired. In addition, storage capacity is going to be expensive, and you may want to think about data egress costs should you need to get your data out in the future.

NetApp Cloud solutions

With its proven data fabric technologies, NetApp is working to enable seamless cloud and hybrid operations at scale. NetApp Cloud Data Services include both in-the-cloud and near-the-cloud options to address diverse data management needs and accelerate results. NetApp Kubernetes Service (NKS) makes it fast and convenient to deploy container environments in the cloud and on-premises and works in conjunction with the NVIDIA GPU Cloud (NGC).

NetApp NFS for Deep Learning

You’ve probably noted that many of the challenges outlined in the three design patterns above come down to I/O performance. While NetApp offers solutions to accelerate your existing HDFS, parallel file system, and cloud object storage deployments, we believe in many cases that NFS is the better solution.

NFS has been applied to a diverse range of workloads ranging from HPC to databases such as Oracle, SQL Server, and SAP to virtualization to big data. This long history of using NFS across a variety of workloads enables it to handle both random and sequential I/O, especially when combined with the benefits of all-flash storage in a scale-out cluster. NetApp FAS and NetApp All-Flash FAS (AFF) systems address your AV development needs in terms of both capacity and performance. For archiving cold data, NetApp FabricPool technology migrates data to and from object storage automatically based on your policies.

Design Pattern 4: Greenfield Deployment

All serious players in autonomous vehicle development are basically in a race to be the first to introduce viable technology, either for general-purpose self-driving or to fill a niche such as ride-sharing, trucking, or autonomous delivery. As a result, no matter where they start, most tend to end up in the same place: a greenfield deployment designed to provide the scalable compute and storage systems necessary to deliver a sustainable advantage. When you’re actively training and testing dozens or even hundreds of deep learning models, every minute saved in processing time is significant.

This means deploying fast, scalable GPU servers along with the fastest data storage and data pipeline technologies.

Reference Architecture for Autonomous Vehicle Development

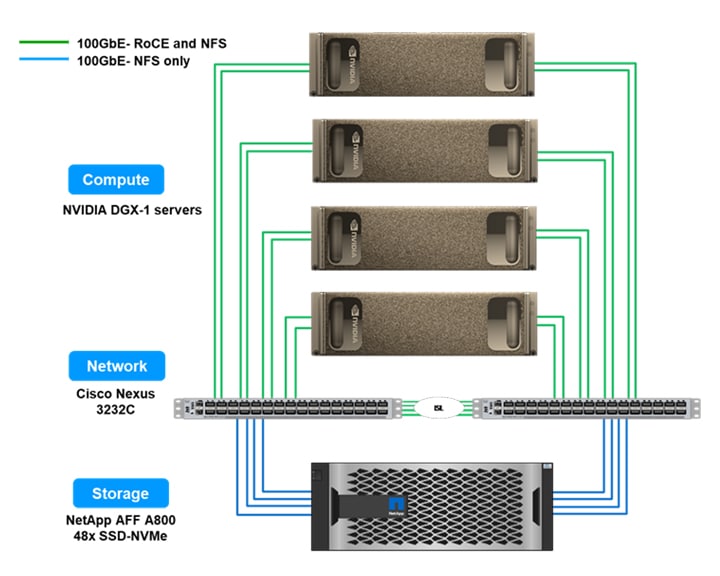

NetApp and NVIDIA are collaborating to create a reference architecture for companies engaged in autonomous vehicle development. It offers directional guidance for building an AI infrastructure using ONTAP AI systems incorporating NVIDIA DGX systems and NetApp AFF storage.

Be sure to download the NetApp technical report at the link below to see the first results and recommendations:

Meet our Automotive AI Experts at these events

NetApp will be at the following industry events this week:

- ADAS & Autonomous Vehicles, September 24-25, Baronette Renaissance, Detroit-Novi, USA

- Auto-AI Europe, September 26-27, Maritim proArte Hotel, Berlin, Germany

More Information and Resources

NetApp ONTAP AI and NetApp data fabric technologies and services can jumpstart your company on the path to success. Check out these resources to learn about ONTAP AI.

- Solution Brief: NetApp AI Solutions for Automotive

- White Paper: Edge to Core to Cloud Architecture for AI

- White Paper: Building a Data Pipeline for Deep Learning

- NetApp Validated Architecture: NetApp ONTAP AI Powered by NVIDIA

- Customer Story: Cambridge Consultants Breaks Artificial Intelligence Limits

Previous blogs in this series about automotive AI use cases:

Santosh Rao

Santosh Rao is a Senior Technical Director and leads the AI & Data Engineering Full Stack Platform at NetApp. In this role, he is responsible for the technology architecture, execution and overall NetApp AI business.

Santosh previously led the Data ONTAP technology innovation agenda for workloads and solutions including NoSQL, big data, virtualization, enterprise apps, and other 2nd and 3rd platform workloads. He has held a number of roles within NetApp and led the original ground-up development of clustered ONTAP SAN for NetApp as well as a number of follow-on ONTAP SAN products for data migration, mobility, protection, virtualization, SLO management, app integration, and all-flash SAN.

Prior to joining NetApp, Santosh was a Master Technologist for HP and led the development of a number of storage and operating system technologies for HP, including development of their early generation products for a variety of storage and OS technologies.
