NetApp HCI Inference at the Edge: Completing the AI Deep Learning Pipeline

Charles Hayes

“This is not the singularity you are looking for.” – The Data Buddha


No need to worry. AI hasn’t decided (yet) to rise up and enslave us all. In fact, for now at least, it seems more like AI is here “to serve man,” and we aren’t talking about a cookbook.

Enter AI inference servers. They aren’t new. Inference helps most of us with daily tasks that we probably don’t even think of as artificial intelligence. Google speech recognition, image search, and spam filtering applications are all examples of AI inference workloads. Today, inference systems and general recommender systems, cited by NVIDIA as the most common edge workload, extend well beyond these simple tools into more complex applications like medical diagnostics, manufacturing, agriculture, analytics, media and entertainment, and more.

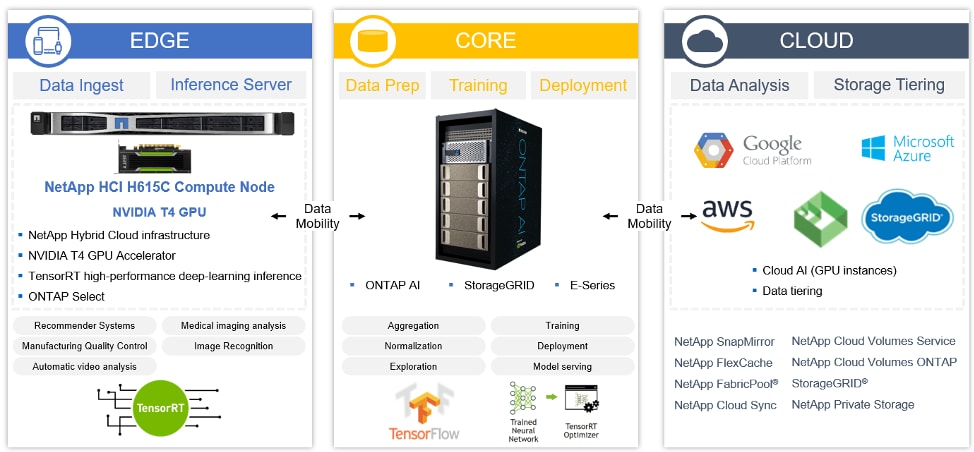

NetApp® technology, in combination with the NVIDIA TensorRT Inference Server, provides an integrated edge, core, cloud solution for these use cases with common AI inference workloads such as:

- General recommender systems

- Medical imaging analysis

- Manufacturing quality control

- Automatic video analysis

- Retail and kiosk image recognition

Pitfalls of the AI Deep Learning Pipeline (and Why Your Data Scientists Might Be Mad at You)

Many data scientists are finding that their job is getting harder to do. Not because they don’t have enough data or good data. The problem is that there is simply too much data to manage without having expertise in storage systems, compute platforms, and general IT management. I’m pretty sure that most data scientists and researchers aren’t very interested in things like IT infrastructure, compute and storage scaling, unplanned downtime, rebuilds, and so on. Who wants to spend their time fixing their tools instead of productively using them to gain insights and improve business outcomes?

Imagine the frustration that data scientists, researchers, and the IT operations teams who support them face when the AI deep learning pipeline breaks down. These are the common pitfalls where productivity slows to a crawl:

- Constrained, slow, or manual access to data and trained models

- Technical complexity of infrastructure and tools

- Data in disparate silos

With the NetApp simplified AI pipeline infrastructure, you get full data mobility throughout the pipeline, from the edge to the core to the cloud. With this data fabric approach you can:

- Continuously improve data preparation, training, and outcomes

- Optimize access to premium GPU resources, whether they’re on your premises or in the cloud

- Seamlessly scale performance and capacity as your AI projects grow

- Enable your data scientists to run more AI projects, faster

NetApp HCI: A Simple, Flexible Solution for AI at the Edge

NetApp provides full data mobility across the deep learning pipeline from edge to core to cloud, enabling continuous improvement of data preparation, training, and outcomes. With this hybrid cloud infrastructure, NetApp HCI acts as a powerful AI inference server, with ONTAP® AI creating the trained model. After you train a model, you can deploy it to perform inference workloads. With NetApp FlexCache® caching, your data scientists can access the trained model without exporting the full model, improving performance and ease of use. When the inference model is deployed, results can be fed back into the training model to improve deep learning. Your applications deliver higher performance by using TensorRT Inference Server on NVIDIA GPUs.
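For readers who think in code, the train, deploy, infer, and feed-back loop described above can be sketched as a toy Python program. This is only an illustration under loud assumptions: `Model`, `train`, and `EdgeSite` are hypothetical names invented for this sketch, the "model" is a simple threshold rather than a deep network, and nothing here calls NetApp HCI, ONTAP AI, FlexCache, or TensorRT Inference Server.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Model:
    """Toy stand-in for a trained model: a single decision threshold."""
    version: int
    threshold: float

    def predict(self, value: float) -> bool:
        return value >= self.threshold


def train(samples: list[tuple[float, bool]], version: int) -> Model:
    """Core site (hypothetical): place the threshold midway between class means."""
    positives = [v for v, label in samples if label]
    negatives = [v for v, label in samples if not label]
    pos_mean = sum(positives) / len(positives)
    neg_mean = sum(negatives) / len(negatives)
    return Model(version=version, threshold=(pos_mean + neg_mean) / 2)


@dataclass
class EdgeSite:
    """Edge site (hypothetical): runs inference and keeps results for retraining."""
    model: Model
    feedback: list[tuple[float, bool]] = field(default_factory=list)

    def infer(self, value: float, true_label: bool) -> bool:
        # Assumes the true label becomes known later (for example, by human review).
        prediction = self.model.predict(value)
        self.feedback.append((value, true_label))
        return prediction


# 1. Train at the core, 2. deploy to the edge, 3. infer, 4. feed results back.
training_set = [(1.0, False), (2.0, False), (8.0, True), (9.0, True)]
model_v1 = train(training_set, version=1)
edge = EdgeSite(model=model_v1)
edge.infer(4.0, True)
edge.infer(3.0, False)
model_v2 = train(training_set + edge.feedback, version=2)
print(model_v1.threshold, model_v2.threshold)
```

In this sketch the retrained model's threshold shifts once the edge results are folded back into the training set, which is the continuous-improvement idea in miniature.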

Streamline the flow of data reliably and speed up training and inference when your data fabric spans from edge to core to cloud.

This is your data in your data fabric, securely stored and easily accessible, to train your models and inform your applications.


Click here to learn more about how you can build a data fabric to accelerate your journey to the cloud

Charles Hayes

Charles Hayes is a Product Marketing Manager focusing on hybrid cloud solutions. He’s a 20-year veteran of the storage industry, joining NetApp in September 2019. Before NetApp, he spent years defining, developing, and marketing products and solutions for SimpleTech, Iomega, EMC, and Lenovo. Charles is also a mediocre percussionist/guitarist, an old-school punk rock fan, and frequently claims he saw all the cool bands before they were cool.