NetApp HPC solutions

AI performance on high

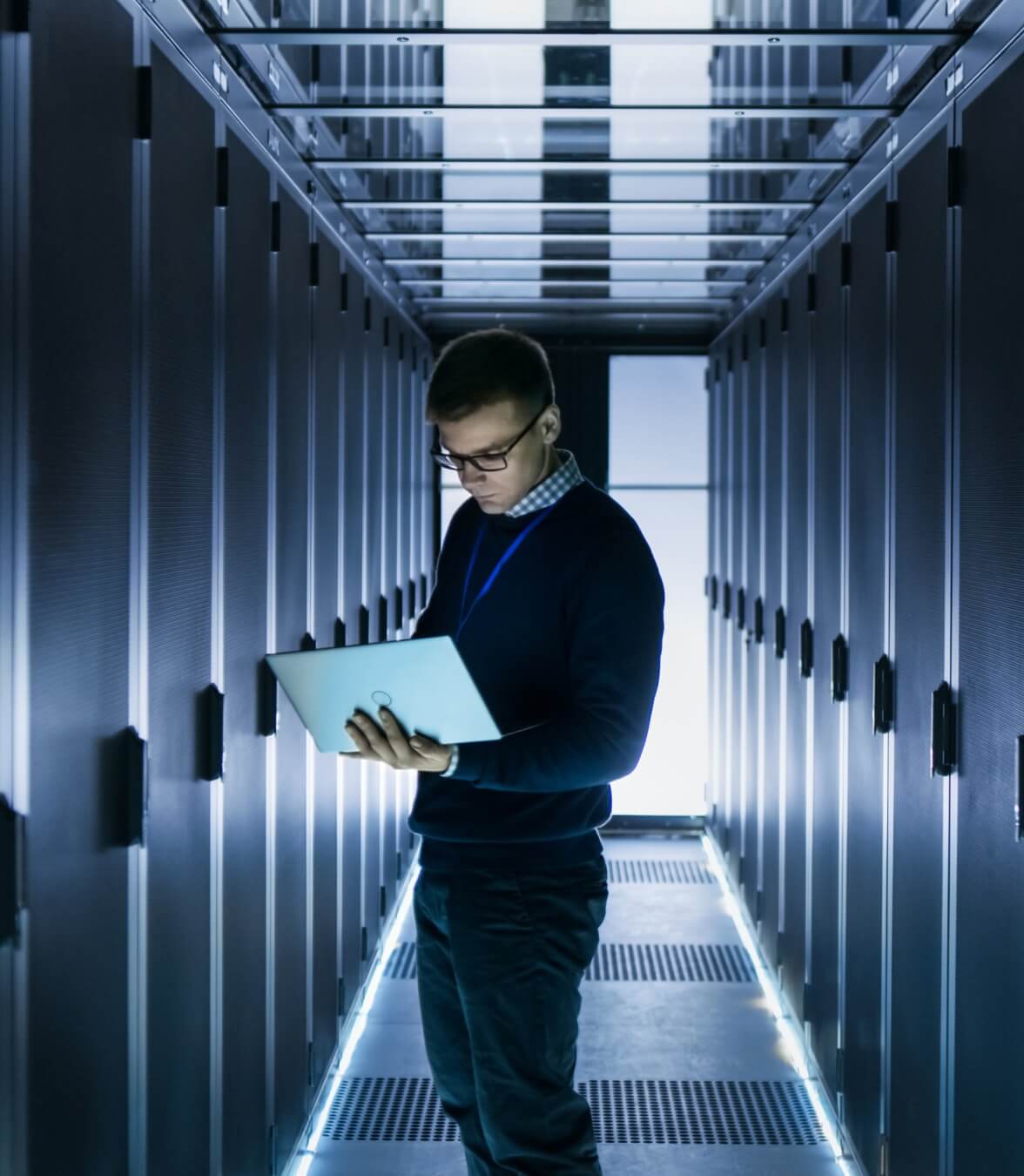

If you don’t think we’re living in exciting times, consider how high-performance computing (HPC) is pushing the boundaries of AI. From large language model training to mission-critical data processing, NetApp® HPC solutions continue to lead the way.

NVIDIA DGX SuperPOD with NetApp E-Series: NetApp’s answer to high-performance computing

Even the fastest supercomputer can’t meet expectations if it doesn’t have equally fast storage to support it. Good news: NetApp EF600 all-flash NVMe storage combined with the BeeGFS parallel file system is certified for NVIDIA DGX SuperPOD. Now it’s possible to deploy HPC solutions that meet the extreme demands of AI workloads from edge to core to cloud.

NVIDIA DGX SuperPOD with NetApp ONTAP Storage: Intelligent Data Infrastructure for centralized AI

AI Centers of Excellence are central hubs for enterprise AI development. With increasing demands from agentic AI and model training, the NVIDIA DGX SuperPOD with NetApp ONTAP Storage combines DGX computing power and NetApp AFF A90 storage to enable ML, AI, and HPC workflows in one high-performance solution.

Key benefits

To process, store, and analyze massive amounts of data, your operations demand the lightning-fast, highly reliable IT infrastructure that NetApp HPC solutions deliver.

Accelerate time to insight

Eliminate design complexity and guesswork with our certified solution. Simplify deployment with full integration into NVIDIA Base Command Manager.

Scale seamlessly as you grow

Quickly respond to changing workload demands and exponential data growth with a building-block architecture that scales performance and capacity as needed.

All the reliability you need

Our fault-tolerant design delivers greater than 99.9999% availability—proven by 1 million NetApp E-Series and EF-Series installations.

Get low TCO

Datasets growing exponentially? Costs spiraling out of control?

Our ultra-high-density architecture delivers the power, cooling, and support savings you need to succeed.

Aruba Enterprise

Web hosting services demand availability and performance. It’s a lot of data to manage. NetApp AFF storage hardware and ONTAP management make data center management easier.

CGG

Fueling resource discovery with on-demand geoscience processing.

Key partnerships

From best-in-class compute systems to enterprise-grade parallel file systems, these are the NetApp partners that will help take your HPC to the next level.
