Automating MLOps, DevOps, and DataOps for Data Scientists and ML Teams

Mike McNamara

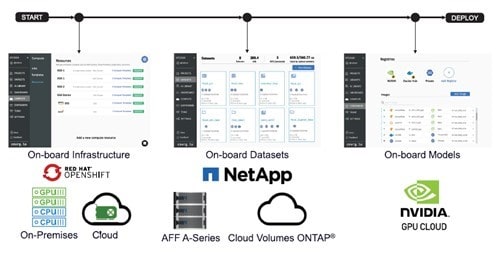

NetApp and cnvrg.io have partnered to deliver a streamlined AI/ML data science pipeline solution that improves productivity and efficiency. The solution incorporates industry-leading managed Kubernetes clusters (for example, Red Hat OpenShift), cached datasets for extreme performance, and one-click attachment of models to datasets with NVIDIA NGC integration. NetApp® ONTAP® AI provides high-performance compute and storage for any scale of operation, and cnvrg.io software streamlines data science workflows, improving resource utilization.

NetApp and cnvrg.io have written a detailed technical paper, Hybrid-cloud AI Operating System with Data Caching, which presents a solution that enables IT professionals and data engineers to create a truly hybrid-cloud AI platform with a topology-aware data hub. Data scientists can instantly and automatically create a cache of their datasets close to the compute, wherever the compute is located. As a result, high-performance model training is easier to accomplish, and multiple AI practitioners can collaborate with immediate access to the cached datasets and versions and can create a dataset-version hub.
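To make the caching idea concrete, here is a minimal Python sketch of a dataset-version hub that creates a cache at the site where the compute runs and reuses it for later requests. It is illustrative only: `DatasetCacheHub`, `ensure_cached`, and the dataset and site names are hypothetical and are not part of the cnvrg.io or NetApp APIs, and the "copy" step is a stand-in for moving real data.

```python
from dataclasses import dataclass, field


@dataclass
class DatasetCacheHub:
    """Toy dataset-version hub (hypothetical, not a cnvrg.io or NetApp API)."""

    # Maps (dataset, version) -> set of site names that already hold a cache.
    caches: dict = field(default_factory=dict)

    def ensure_cached(self, dataset: str, version: str, compute_site: str) -> bool:
        """Ensure a cache of this dataset version exists at compute_site.

        Returns True if a new cache entry was created, False if one was reused.
        """
        sites = self.caches.setdefault((dataset, version), set())
        if compute_site in sites:
            return False  # cache already sits next to the compute; reuse it
        sites.add(compute_site)  # stand-in for copying data near the compute
        return True


hub = DatasetCacheHub()
# First training job at a site triggers cache creation.
first_request_created = hub.ensure_cached("images", "v2", "on-prem-cluster")
# A colleague's job at the same site reuses the existing cache.
second_request_created = hub.ensure_cached("images", "v2", "on-prem-cluster")
# A job at another site gets its own cache of the same version.
cloud_request_created = hub.ensure_cached("images", "v2", "cloud-cluster")
```

In this sketch, the first request at each site creates the cache and every later request for the same dataset version at that site reuses it, which is the behavior that lets multiple practitioners share cached datasets and versions.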

To learn more, read the technical report. To experiment with cnvrg.io, download the free community version.

Mike McNamara

Mike McNamara is a senior product and solution marketing leader at NetApp with over 25 years of data management and cloud storage marketing experience. Before joining NetApp over ten years ago, Mike worked at Adaptec, Dell EMC, and HPE. Mike was a key team leader driving the launch of a first-party cloud storage offering and the industry’s first cloud-connected AI/ML solution (NetApp), unified scale-out and hybrid cloud storage system and software (NetApp), iSCSI and SAS storage system and software (Adaptec), and Fibre Channel storage system (EMC CLARiiON).

In addition to his past role as marketing chairperson for the Fibre Channel Industry Association, he is a member of the Ethernet Technology Summit Conference Advisory Board, a member of the Ethernet Alliance, a regular contributor to industry journals, and a frequent event speaker. Mike also published a book through FriesenPress titled "Scale-Out Storage - The Next Frontier in Enterprise Data Management" and was listed as a top 50 B2B product marketer to watch by Kapos.
