Unlocking AI training in the cloud


Mike Oglesby

To train AI models in the public cloud, you need access to meaningful data. Typically, that data is distributed throughout the organization and often resides on NAS volumes. This presents a challenge, because many popular AI tools and workflows are designed around object storage APIs. Object storage is also the type of storage that most data scientists working in the public cloud know best.

Dataset size and data movement are also considerations. The extremely large models that research organizations build are trained on massive datasets, but most enterprise data scientists train their models on representative subsets. Because it is simple and easy to access, object storage is a good fit for those smaller datasets. Unfortunately, moving data from enterprise file storage platforms to public cloud object storage platforms is complicated and raises concerns about governance and data lineage. Often, those hurdles mean that the data never reaches the public cloud, and data scientists can't work to their full potential.

At NetApp, our mission is to tear down those roadblocks.

NetApp technology clears the path for easy, secure access

Starting in NetApp® ONTAP® 9.12.1, files that reside on NAS volumes can be accessed through the industry-standard Simple Storage Service (S3) object storage API. As a result, you can access data on your NAS systems without changing your S3 API-based workflows.
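To make the file-to-object relationship concrete, here is a minimal Python sketch. It assumes (the article does not say this) that a NAS bucket is mapped to a directory on an ONTAP volume and that each object key is the file's path relative to that directory, using `/` separators. The function name and example paths are hypothetical.

```python
from pathlib import PurePosixPath


def nas_path_to_s3_key(bucket_root: str, file_path: str) -> str:
    """Map a file path on a NAS volume to the S3 key it would have in a NAS bucket.

    Assumption (hypothetical): the bucket is mapped to `bucket_root`, and object
    keys are file paths relative to that directory, joined with '/'.
    """
    root = PurePosixPath(bucket_root)
    path = PurePosixPath(file_path)
    # relative_to() raises ValueError if the file is outside the bucket's directory
    return path.relative_to(root).as_posix()


# Example with hypothetical paths:
# nas_path_to_s3_key("/vol_data/datasets", "/vol_data/datasets/images/cat.png")
# gives the key "images/cat.png"
```

Under this assumption, a file written over NFS or SMB would appear to an S3 client under the same relative path, which is what lets existing S3 tooling read NAS data unchanged.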

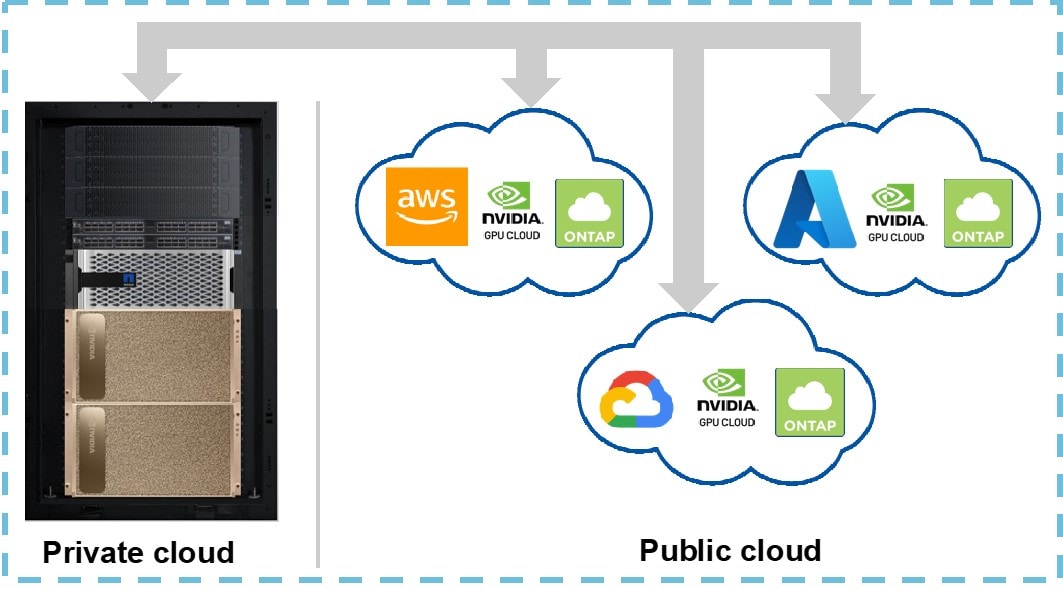

Better yet, if network speeds between your on-premises and public cloud environments are sufficient, you don’t even need to move the data to a public cloud storage platform. If your network speeds are a constraint, however, then you can use NetApp’s industry-leading data fabric technologies to quickly and efficiently move your data to where it needs to be.

For example, you can use the NetApp SnapMirror® feature of ONTAP to continuously and efficiently replicate data to a Cloud Volumes ONTAP instance in the public cloud, bringing the data closer to your end users. Just as with on-premises ONTAP, you can access data in Cloud Volumes ONTAP through the S3 API. And to easily manage the replication relationship that brings the data to Cloud Volumes ONTAP, you can use the NetApp BlueXP™ unified control plane. BlueXP is a web-based console that enables you to manage all your NetApp storage systems and services across your hybrid cloud environment.

Gain more insight with familiar tools

This new S3 functionality enables many use cases. For example, a data scientist working in a notebook on the Amazon SageMaker platform can access ONTAP NAS data by using the popular Boto3 library. The same applies to a data scientist working in a Jupyter Notebook on either Google Cloud or Azure. And automated data pipeline workflows and tools that support the S3 API can also directly access ONTAP NAS data.
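As an illustration of the Boto3 case, the following sketch points a standard Boto3 S3 client at an ONTAP S3 endpoint through the `endpoint_url` parameter, then lists and reads objects in a bucket. The endpoint URL, bucket name, credentials, and helper function names are hypothetical placeholders; in practice, your storage administrator would supply the real endpoint and an S3 user's access keys.

```python
def make_ontap_s3_client(endpoint_url, access_key, secret_key, verify=True):
    """Create a Boto3 S3 client that talks to an ONTAP S3 server instead of AWS.

    endpoint_url, access_key, and secret_key are hypothetical placeholders.
    verify may be False or a path to a CA bundle if the server uses a private CA.
    """
    import boto3  # imported lazily so the helpers below work with any S3-like client

    return boto3.client(
        "s3",
        endpoint_url=endpoint_url,
        aws_access_key_id=access_key,
        aws_secret_access_key=secret_key,
        verify=verify,
    )


def list_keys(s3, bucket, prefix=""):
    """Return every object key under `prefix`, following list_objects_v2 pagination."""
    keys = []
    paginator = s3.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket, Prefix=prefix):
        # Pages with no matching objects omit the "Contents" field
        for obj in page.get("Contents", []):
            keys.append(obj["Key"])
    return keys


def read_object(s3, bucket, key):
    """Download a single object's bytes (for example, one training file)."""
    response = s3.get_object(Bucket=bucket, Key=key)
    return response["Body"].read()


# Example usage (placeholder endpoint, bucket, and credentials):
# s3 = make_ontap_s3_client("https://ontap-s3.example.com", "ACCESS_KEY", "SECRET_KEY")
# for key in list_keys(s3, "training-data", prefix="images/"):
#     data = read_object(s3, "training-data", key)
```

Because the result is an ordinary Boto3 client, code that already calls `list_objects_v2` or `get_object` against Amazon S3 should need only a different endpoint and credentials.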

With all that accessibility, you can continue to use most, if not all, of the tools and workflows that you’re already familiar with. The only difference is that you now have access to a lot more meaningful data!

Another benefit of the new S3 functionality that’s built into ONTAP is that you can better focus on your core tasks as you work in the public cloud. You can build and test machine learning models without having to spend time and effort on learning and managing a new storage system—which means faster time to insight.

Enhance your AI training in the cloud today

With NetApp, you can unlock the potential of the cloud by enabling easy access to meaningful data that drives AI training. To learn more, visit the NetApp AI landing page.
