The right data, on the right cloud, at the right time

Laurence James

Consciously or subconsciously, we all tier the objects that are dear to us. I keep the objects I value most in the drawer next to my bed, notably the engraved pen set that my mother gave me on the day I started my first job many decades ago. Then there are all the other drawers and cupboards in the house that contain objects of importance but of less value today. These are, of course, much more difficult to locate and retrieve. Please don’t make me go into the loft or the garage. These contain what can only be described as the permafrost of stored objects: they are difficult or impossible to access and take the longest time to retrieve.

Okay, so we’ve established that I need a life laundry (in other words, a declutter), and it’s the same for your data. All those decades ago, when I started my working life as an analyst programmer, my company continually ingested environmental data from sensors all over the globe. At the moment of ingestion, the raw data was of extremely high importance and value. It formed the starting point for numerical modeling. By the next day, its value had diminished. In an environment where the value and importance of data were continually changing, the ability to automatically manage where data resided, and how it was accessed, as its “temperature” changed from hot to cool to cold to frozen was highly desirable.

And so I was tasked with implementing a hierarchical storage management (HSM) system. Back then, HSM followed a set of business policies that governed which data was managed, the aging criteria, and the storage targets. How aggressive the HSM policy had to be was determined by how much tier 1 storage we could afford, along with the volume of data we ingested. Together, those figures told us how much free capacity we needed to keep available to run the models. In those days, tier 1 storage capacities were measured in MB, not GB, and certainly not in TB! Tier 2 storage was tape!
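The trade-off described above comes down to simple arithmetic. The sketch below assumes a steady daily ingest rate and a fixed amount of free headroom that must stay available on tier 1 for the models; the function name and all figures are hypothetical, not numbers from the original system. It returns the longest aging threshold, in whole days, that still leaves the required headroom, so a smaller result means a more aggressive policy.

```python
def max_days_on_tier1(tier1_capacity_mb: float,
                      free_headroom_mb: float,
                      daily_ingest_mb: float) -> int:
    """Return the longest aging threshold (in whole days) that keeps the
    required free headroom on tier 1, assuming a steady daily ingest rate.
    Hypothetical helper for illustration only."""
    if daily_ingest_mb <= 0:
        raise ValueError("daily_ingest_mb must be positive")
    usable_mb = tier1_capacity_mb - free_headroom_mb
    if usable_mb < 0:
        raise ValueError("headroom exceeds tier 1 capacity")
    # Each day of retention on tier 1 consumes one day's worth of ingest.
    return int(usable_mb // daily_ingest_mb)


if __name__ == "__main__":
    # Hypothetical figures in MB, in the spirit of the era described above.
    days = max_days_on_tier1(tier1_capacity_mb=500,
                             free_headroom_mb=100,
                             daily_ingest_mb=40)
    print(f"Migrate data older than {days} days")  # prints: Migrate data older than 10 days
```

A smaller tier 1 budget, a larger headroom requirement, or a faster ingest rate all shrink the threshold, which is what forced a more aggressive migration policy.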

Together, the policies that governed when data migrated between tiers and the HSM software itself ensured that the data remained referentially accessible to the application, regardless of where it resided in the hierarchy.
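To make referential accessibility concrete, here is a minimal two-tier toy model of HSM. It assumes a single age-based migration rule and recall-on-read, a common HSM behavior chosen here for illustration; the class and method names are hypothetical, and this is not how any particular HSM product was implemented. The point to notice is that `read` takes the same name whether the data sits on tier 1 or tier 2.

```python
from dataclasses import dataclass, field


@dataclass
class ToyHSM:
    """Two-tier toy HSM: data ages out of tier 1 under a policy,
    but the application always reads it by the same name."""

    max_age_days: int  # hypothetical aging criterion: migrate when older than this
    tier1: dict = field(default_factory=dict)  # fast, expensive storage
    tier2: dict = field(default_factory=dict)  # slow, cheap storage (tape, back then)
    age_days: dict = field(default_factory=dict)

    def write(self, name: str, data: bytes) -> None:
        self.tier1[name] = data
        self.age_days[name] = 0

    def advance(self, days: int = 1) -> None:
        """Let time pass, then apply the migration policy."""
        for name in self.age_days:
            self.age_days[name] += days
        for name, age in self.age_days.items():
            if age > self.max_age_days and name in self.tier1:
                self.tier2[name] = self.tier1.pop(name)

    def read(self, name: str) -> bytes:
        """Transparent access: callers never need to know which tier holds the data."""
        if name in self.tier1:
            return self.tier1[name]
        if name in self.tier2:
            # Recall: move the data back to tier 1 and restart its aging clock.
            data = self.tier2.pop(name)
            self.tier1[name] = data
            self.age_days[name] = 0
            return data
        raise KeyError(name)

    def location(self, name: str) -> str:
        return "tier1" if name in self.tier1 else "tier2"


if __name__ == "__main__":
    hsm = ToyHSM(max_age_days=1)
    hsm.write("obs-001", b"raw sensor data")
    hsm.advance(days=2)
    print(hsm.location("obs-001"))  # tier2
    print(hsm.read("obs-001"))      # b'raw sensor data'
    print(hsm.location("obs-001"))  # tier1
```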

Fast forward a couple of decades. By then I was working for the company that defined the term information lifecycle management (ILM), and I became the ILM business manager. The focus of ILM was to help customers solve pressing business issues, such as the flood of data and information and its changing value over time. Here are a couple of mantras from that time (2003), taken from my aging notes.

“There is no good reason to store less valuable, aging information on the same storage device as more valuable, new information.”

And the one that has always stuck with me:

“The right data, on the right device, at the right time in its lifecycle, at the right price, at the right service level.”

Fast forward to the present, and I hope you’re seeing the thread. Today we call this tiering, although I’m continually confused by references to data tiering, storage tiering, and cloud tiering! Let’s keep it simple and call it data tiering, because it’s your data and your operation. Organizations today ingest, create, and generate vastly more data than ever before, and there is now a wide choice of storage targets for tiered data, including the plethora of storage offerings from cloud service providers (CSPs). The fundamental premise, however, remains the same. So let me modify one word and modernize the second mantra from 2003.

“The right data, on the right cloud, at the right time in its lifecycle, at the right price, at the right service level.”

The requirement for data tiering is as strong today as it has ever been, if not stronger. Many organizations are forming strategies for intelligent data management. They aim to protect their investment in tier 1 performance storage, such as NetApp AFF or FAS systems, from capacity shortages and loss of performance by actively managing where inactive, cold data resides. This active management maintains service delivery and customer satisfaction.

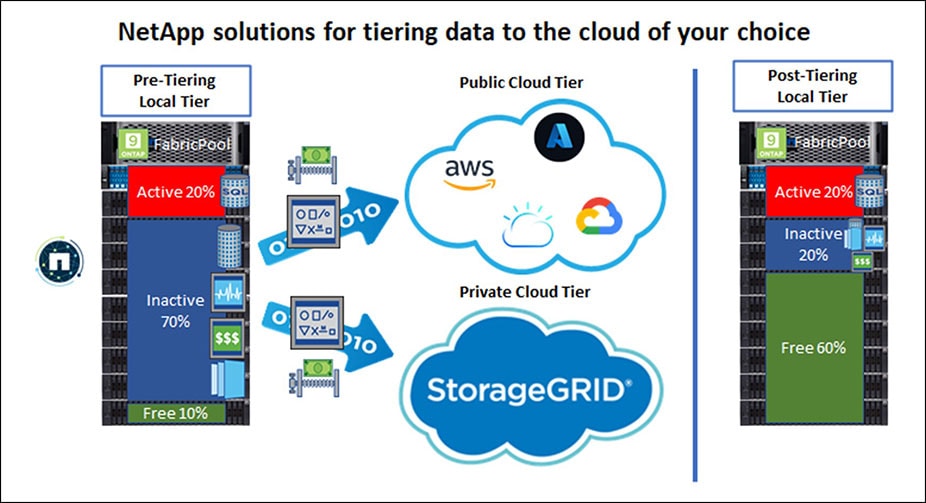

FabricPool is the data tiering function in NetApp® ONTAP® that gives you access to tiering policies, tiering targets, and automation. Depending on your corporate governance, compliance, and regulatory requirements, you may be required to tier some data to the private cloud only. NetApp StorageGRID® object storage is a robust, cost-efficient target for locally tiered data. You might also have data that is free to be tiered to the public cloud. In that case, you can choose a CSP and tier your data to one of its storage tiers, depending on your requirements. Here are some example cloud tier targets:

- Amazon S3 (Standard, Standard-IA, One Zone-IA, Intelligent-Tiering)

- Amazon Commercial Cloud Services (C2S)

- Google Cloud Storage (Multi-Regional, Regional, Nearline, Coldline, Archive)

- IBM Cloud Object Storage (Standard, Vault, Cold Vault, Flex)

- Microsoft Azure Blob Storage (Hot and Cool)
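The two decisions described above, which data counts as cold and where it is allowed to go, can be sketched as a simplified model. The policy names mirror FabricPool's tiering policies (`none`, `snapshot-only`, `auto`, `all`), but the logic is a simplification for illustration, not ONTAP's implementation; the `Block` fields, the `cooling_days` value, and the target labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Block:
    """Hypothetical per-block metadata used by this simplified model."""
    days_since_access: int
    in_active_file_system: bool  # False if only Snapshot copies reference it


def should_tier(block: Block, policy: str, cooling_days: int) -> bool:
    """Decide whether a block moves to the cloud tier under a given policy."""
    if policy == "none":
        return False  # everything stays on the performance tier
    if policy == "all":
        return True  # tier everything, regardless of temperature
    is_cold = block.days_since_access >= cooling_days
    if policy == "snapshot-only":
        # Only cold blocks that the active file system no longer uses.
        return is_cold and not block.in_active_file_system
    if policy == "auto":
        # Cold blocks from both Snapshot copies and the active file system.
        return is_cold
    raise ValueError(f"unknown policy: {policy}")


def choose_target(private_cloud_only: bool) -> str:
    """Route tiered data according to a hypothetical governance flag."""
    if private_cloud_only:
        return "StorageGRID (private cloud)"
    return "public cloud (for example, Amazon S3 Standard-IA)"


if __name__ == "__main__":
    stale = Block(days_since_access=45, in_active_file_system=True)
    print(should_tier(stale, "auto", cooling_days=31))           # True
    print(should_tier(stale, "snapshot-only", cooling_days=31))  # False
    print(choose_target(private_cloud_only=True))  # StorageGRID (private cloud)
```

Keeping the two decisions in separate functions reflects that compliance requirements constrain the target, while the tiering policy determines which data moves.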

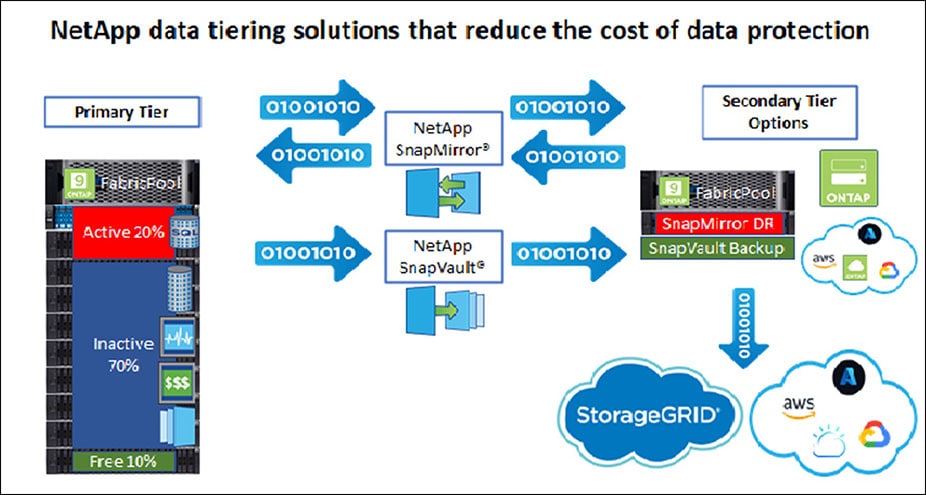

NetApp data tiering also delivers additional cost benefits for customers who are challenged by the growth of secondary data protection storage. In this scenario, FabricPool can be deployed to tier cold data from secondary NetApp SnapMirror® or SnapVault® backups to lower-cost storage, such as a local StorageGRID system or the public cloud.

In either case, the benefits of deduplication, compression, compaction, and encryption are realized, reducing the required secondary storage capacity and transport costs while maintaining the efficiencies and security of your data assets throughout their lifecycle.

A good example of data tiering with NetApp technology is the Laboratory for Atmospheric and Space Physics (LASP) in Boulder, Colorado. Astronomy produces some of the largest, most complex, and most diverse datasets in the world. To manage this volume of data, LASP uses FabricPool and StorageGRID to automatically tier huge amounts of data, achieving a 50% cost saving. Read the full case study: LASP Data Tiering.

As you can see, data tiering has a long history that goes back many decades.

For me, the following things differentiate the NetApp data tiering offering from the competition:

- A choice of local or remote secondary storage targets.

- A tangible cost benefit from delaying tier 1 storage upgrades.

- Policy-based, intelligent, zero-touch automation that frees IT staff to focus on service delivery.

- Protection against “out-of-space” conditions that affect customer-facing applications.

- Data efficiencies that are maintained throughout the tiering process, reducing costs.

If you would like to find out more about NetApp data tiering technologies, here are my recommendations for further reading:

Intelligent data growth management

Inactive Data Tiering to Amazon AWS S3 with FabricPool on FlexPod
