Rapid and Reliable Code Delivery with DevOps as a Service (DaaS)

Sushma Sattigeri

As organizations change their development models, the tools that support these organizations must transform. At NetApp, we’ve seen a change in the development landscape, from monoliths to microservices. To help evolving teams achieve rapid, frequent, reliable code delivery, and to enhance engineering productivity, we are offering DevOps as a Service (DaaS). This solution provides automated installation, configuration, and deployment of services such as Jenkins, source control management, and the plugins needed for the workflows. DaaS supports teams with best practices from the time code is written until it is delivered, significantly reducing their cycle time.


Features and Value of DaaS

Based on Kubernetes, the DaaS solution offers centralized management of the entire software development tools ecosystem, such as Jenkins, source control management (SCM), artifact storage, and monitoring. Docker Engine runs the containers, and Helm deploys the solution. Trident manages our persistent volumes, so storage can come from NetApp ONTAP®, Element® software, Cloud Volumes Service, or Cloud Volumes ONTAP. A set of internal services is built with Flask, and CouchDB stores information about pipelines. Bitbucket, Perforce, or GitHub can be used as the SCM, and the solution can run on premises or in the cloud.

The key value of DaaS is the automated deployment of Jenkins. Along with deploying the Jenkins image, DaaS takes care of the following automatically, without any user intervention:

- Customizing the Jenkins image

- Installing additional plugins

- Enabling the Kubernetes plugin

- Connecting to SCM to detect new code commits

- Starting new jobs as soon as pipelines are created
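The automated steps above can be pictured as a single Helm values override that each team's Jenkins release receives. The following is a minimal Python sketch, not DaaS's actual configuration: it assumes the community Jenkins Helm chart, whose `controller.image` and `controller.installPlugins` keys may be laid out differently in other chart versions, and the registry path, image tag, and SCM-to-plugin mapping are illustrative assumptions.

```python
import json

# Assumed mapping from SCM type to its Jenkins branch-source plugin ID.
SCM_PLUGINS = {
    "bitbucket": "cloudbees-bitbucket-branch-source",
    "github": "github-branch-source",
    "perforce": "p4",
}

# Plugins every instance gets; "kubernetes" enables dynamic pod agents.
BASE_PLUGINS = ["kubernetes", "workflow-aggregator", "git", "configuration-as-code"]


def jenkins_values(scm: str, image_repo: str, image_tag: str) -> dict:
    """Build a Helm values override for one team's Jenkins controller.

    Key names follow the community Jenkins chart layout (assumption);
    check them against the chart version you actually deploy.
    """
    if scm not in SCM_PLUGINS:
        raise ValueError(f"unsupported SCM: {scm}")
    plugins = BASE_PLUGINS + [SCM_PLUGINS[scm]]
    return {
        "controller": {
            # Customized Jenkins image (hypothetical registry path and tag)
            "image": {"repository": image_repo, "tag": image_tag},
            # ":latest" keeps the sketch short; pin exact versions in practice
            "installPlugins": [f"{p}:latest" for p in plugins],
        }
    }


if __name__ == "__main__":
    values = jenkins_values("bitbucket", "registry.example.com/daas/jenkins", "custom-1")
    # JSON is valid YAML, so this output can be passed to `helm install -f`.
    print(json.dumps(values, indent=2))
```

Generating values rather than editing them by hand is what makes each deployment repeatable: the same inputs always yield the same release configuration.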

Automated Installation Using Helm

This step, run by a Kubernetes administrator, installs base services using Helm. Once the base services are up and running, the web user interface can be used to deploy the solution.

Creation of base Helm charts in a namespace
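Installing the base services can be scripted as one `helm upgrade --install` call per service, which installs a release if it is missing and upgrades it otherwise, so the step is safe to rerun. This sketch only builds the command lines; the `BaseService` type, release names, chart references, and the `daas` namespace are hypothetical.

```python
import shlex
from dataclasses import dataclass, field


@dataclass
class BaseService:
    release: str                 # Helm release name (hypothetical)
    chart: str                   # chart reference: "repo/chart" or a local path
    values_files: list = field(default_factory=list)


def helm_install_cmds(namespace: str, services: list) -> list:
    """Return one idempotent `helm upgrade --install` command per base service."""
    cmds = []
    for svc in services:
        cmd = ["helm", "upgrade", "--install", svc.release, svc.chart,
               "--namespace", namespace, "--create-namespace"]
        for vf in svc.values_files:
            cmd += ["-f", vf]    # layer values files in the given order
        cmds.append(cmd)
    return cmds


if __name__ == "__main__":
    services = [
        BaseService("daas-api", "./charts/daas-api", ["values/daas-api.yaml"]),
        BaseService("couchdb", "couchdb/couchdb"),
    ]
    for cmd in helm_install_cmds("daas", services):
        # To execute instead of print: subprocess.run(cmd, check=True)
        print(shlex.join(cmd))
```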

User Interface to Deploy the Solution

This step enables a solutions owner or an admin to configure and deploy a containerized Jenkins instance and integrate it with the selected SCM, along with deploying and configuring all the necessary plugins. What used to take an admin several hours is now fully automated and takes just minutes with a single click. Deploying the solution with Helm charts (infrastructure as code) makes the procedure programmable and repeatable, saving developers a lot of time. Because everything is defined in Helm and kept under source control, further modifications to the project can be saved, changes can be reverted, and information can be stored safely before upgrades.

Web UI to select and install the required services

Once the required services are installed, Jenkins is installed and deployed along with all the configurations from the Helm charts. No manual intervention is required.
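Reverting changes relies on Helm's release history: every `helm upgrade` records a new revision, and `helm rollback <release> <revision>` re-applies an earlier revision's configuration as yet another new revision. The toy `ReleaseHistory` class below (a hypothetical name) models only that bookkeeping; it is an illustration, not how Helm stores releases.

```python
from copy import deepcopy


class ReleaseHistory:
    """Toy model of Helm's revision history for one release.

    Every upgrade *and* every rollback appends a new revision,
    so the history itself is never rewritten.
    """

    def __init__(self, values: dict):
        self.revisions = [deepcopy(values)]  # revision 1 is the initial install

    @property
    def current(self) -> dict:
        return self.revisions[-1]

    def upgrade(self, values: dict) -> int:
        self.revisions.append(deepcopy(values))
        return len(self.revisions)  # new 1-based revision number

    def rollback(self, revision: int) -> int:
        if not 1 <= revision <= len(self.revisions):
            raise ValueError(f"no such revision: {revision}")
        # Rolling back re-applies an old configuration as a new revision.
        self.revisions.append(deepcopy(self.revisions[revision - 1]))
        return len(self.revisions)


if __name__ == "__main__":
    h = ReleaseHistory({"plugins": ["git"]})
    h.upgrade({"plugins": ["git", "p4"]})  # revision 2
    h.rollback(1)                          # revision 3, same values as revision 1
    print(len(h.revisions), h.current)
```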


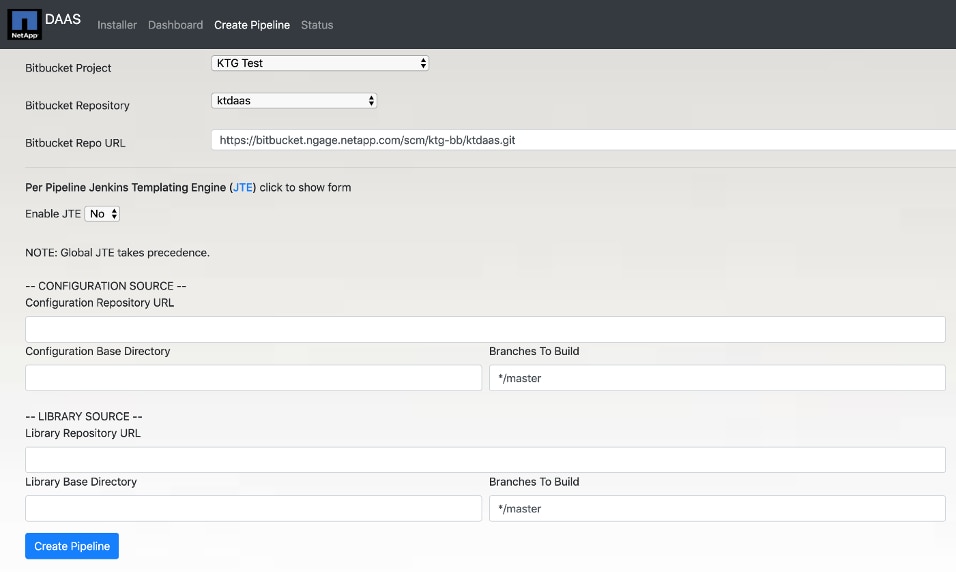

Jenkins Pipeline Creation Automation

This feature automatically creates a multibranch pipeline that pulls code from the SCM and uses dynamic Jenkins agents to execute the pipeline steps.

Each time a new project is created, new pipelines need to be created for each repository. With dynamic Kubernetes pods as Jenkins agents, installing the needed plugins, connecting to SCM, and creating the pipelines are all handled automatically.

Automated creation of the pipeline
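Each dynamic agent is an ordinary Kubernetes pod that exists only for the duration of a build. In practice the Jenkins Kubernetes plugin generates these pods from pod templates; the sketch below just shows the shape of such a manifest. The `jnlp` container name is the plugin's convention for the agent container, while the `agent_pod` helper, labels, build image, and resource requests are assumptions for illustration.

```python
def agent_pod(build_id: str, build_image: str,
              cpu: str = "500m", memory: str = "1Gi") -> dict:
    """Return a Pod manifest for one ephemeral Jenkins agent (illustrative)."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {
            "name": f"jenkins-agent-{build_id}",
            "labels": {"app": "jenkins-agent", "build": build_id},
        },
        "spec": {
            "restartPolicy": "Never",  # the pod is discarded after the build
            "containers": [
                # Agent container that connects back to the Jenkins controller
                {"name": "jnlp", "image": "jenkins/inbound-agent"},
                {
                    # Tool container where pipeline steps execute
                    "name": "build",
                    "image": build_image,
                    # Keep the container alive (assumes GNU coreutils `sleep`)
                    "command": ["sleep"],
                    "args": ["infinity"],
                    "resources": {"requests": {"cpu": cpu, "memory": memory}},
                },
            ],
        },
    }
```

Because the pod is created per build and deleted afterward, cluster capacity is only consumed while pipelines are actually running.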

The SCM service account is used to populate the list of projects and the repositories associated with the selected project. The SCM URL is auto-populated based on the project and the repository selected.
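Deriving the SCM URL amounts to filling the selected project and repository into the SCM's URL layout. This minimal sketch assumes HTTPS clone URLs and uses Bitbucket Server's `/scm/<project>/<repo>.git` and GitHub's `/<owner>/<repo>.git` layouts. It leaves Perforce out because Perforce addresses code by depot path rather than by clone URL. The `clone_url` function and the host names are hypothetical.

```python
from urllib.parse import quote


def clone_url(scm: str, host: str, project: str, repo: str) -> str:
    """Derive an HTTPS clone URL from the selected project and repository."""
    # Percent-encode each path segment so odd characters cannot break the URL.
    p, r = quote(project, safe=""), quote(repo, safe="")
    if scm == "bitbucket":
        # Bitbucket Server layout: /scm/<PROJECT_KEY>/<repo>.git
        return f"https://{host}/scm/{p}/{r}.git"
    if scm == "github":
        # GitHub layout: /<owner>/<repo>.git (the "project" is the owner or org)
        return f"https://{host}/{p}/{r}.git"
    raise ValueError(f"no URL rule for SCM type: {scm}")
```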


This step significantly cuts down the time and manual effort required from a project admin. Once a pipeline has been created, the following things are taken care of automatically:

- Every time a new branch is created in a repository, that branch is automatically pulled in and a pipeline build for the branch is triggered.

- Every time there is a code commit in an existing branch in a repository, a pipeline build is automatically triggered for that branch.

- When a pipeline is deleted, the Jenkins folder and all of its configurations are automatically deleted, and the Kubernetes resources that were allocated for the pipeline are released.
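The three automatic behaviors above amount to a small event handler per pipeline. The sketch below is a toy model under that reading, not Jenkins's multibranch implementation: the `MultibranchPipeline` class and its methods are hypothetical, and real branch discovery happens through SCM webhooks or periodic branch indexing.

```python
from dataclasses import dataclass, field


@dataclass
class MultibranchPipeline:
    """Toy model of the automatic multibranch behavior described above."""
    repo: str
    branches: set = field(default_factory=set)
    builds: list = field(default_factory=list)  # queued (branch, commit) pairs
    deleted: bool = False

    def on_push(self, branch: str, commit: str) -> None:
        if self.deleted:
            return  # ignore events for a pipeline that no longer exists
        # A previously unseen branch is adopted automatically...
        self.branches.add(branch)
        # ...and every push, to a new or existing branch, triggers a build.
        self.builds.append((branch, commit))

    def delete(self) -> None:
        # Drop the folder's jobs and queued work; this is also where the
        # Kubernetes resources allocated to the pipeline would be released.
        self.branches.clear()
        self.builds.clear()
        self.deleted = True
```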

Jenkins Templating Engine (JTE) for Pipeline Management

This framework enables projects to have templated workflows, which can be reused by multiple teams simultaneously regardless of the tools they are using. Projects can be complex, and their Jenkinsfiles can get quite long, with multiple pipelines and many lines of code. JTE simplifies these Jenkinsfiles by breaking them down into reusable templates that are easy to understand and debug.

Key features of JTE:

- Reusable libraries

- Governance rules can be imposed on the workflows at multiple levels (configuration level, library level, and project level)

- Project-specific custom scripts

- Define branching and deployment strategy as code (advanced feature)

- No configuration is stored on the Jenkins server
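The core JTE idea is that one template names the steps while interchangeable libraries supply them, and higher governance tiers can lock choices. The following Python sketch models that idea only. In JTE itself, templates and library steps are written in Groovy, and configuration lives in `pipeline_config.groovy` files. The library names, commands, and the `merge_config` and `render` helpers are hypothetical, and the merge rule is a simplification of JTE's governance semantics.

```python
# One pipeline template shared by every team: an ordered list of step names.
TEMPLATE = ["build", "unit_test", "deploy"]

# Hypothetical reusable libraries: each maps step names to the command it runs.
LIBRARIES = {
    "maven": {"build": "mvn -B package", "unit_test": "mvn -B test"},
    "gradle": {"build": "gradle build", "unit_test": "gradle test"},
    "helm": {"deploy": "helm upgrade --install app ./chart"},
}


def merge_config(org: dict, project: dict, overridable: set) -> dict:
    """Apply a project-level config on top of an org-level (governance) config.

    Keys set by the org tier are locked unless listed in `overridable`.
    """
    merged = dict(org)
    for key, value in project.items():
        if key in org and key not in overridable:
            continue  # governance rule: the project may not change this key
        merged[key] = value
    return merged


def render(template: list, libraries: list) -> list:
    """Resolve each template step to the command of the library providing it."""
    steps = {}
    for lib in libraries:
        steps.update(LIBRARIES[lib])
    missing = [s for s in template if s not in steps]
    if missing:
        raise ValueError(f"no library implements: {missing}")
    return [steps[s] for s in template]


if __name__ == "__main__":
    cfg = merge_config(
        {"deploy_library": "helm"},                                 # org tier
        {"build_library": "gradle", "deploy_library": "kubectl"},  # project tier
        overridable=set(),
    )
    print(render(TEMPLATE, [cfg["build_library"], cfg["deploy_library"]]))
```

Here the project's attempt to swap the deploy library is ignored because the org tier locked it, while its choice of build tool is honored. The result is `["gradle build", "gradle test", "helm upgrade --install app ./chart"]`.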

Data Monitoring and Metrics

Linkerd and Splunk are used to monitor the solution. Linkerd is a service mesh for Kubernetes; in combination with Splunk, it provides operational and development metrics.

- Operational: monitors the infrastructure for failures:

  - Base metrics, such as success rate and latency, for deployments and pods

  - Live calls over HTTP, TCP, and gRPC

  - Debugging of services at the application layer

- Developmental: monitors the pipelines, pods, and automation for failures
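The operational base metrics reduce to simple arithmetic over requests. Success rate is successful responses divided by total responses, and latency is summarized as percentiles such as p50, p95, and p99, which are the latency figures Linkerd reports. This sketch uses nearest-rank percentiles over raw samples; Linkerd derives its percentiles from Prometheus histograms, so its numbers can differ slightly. The `Request` type and helper names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    ok: bool           # True if the response counted as a success
    latency_ms: float  # end-to-end latency of the request


def success_rate(reqs: list) -> float:
    """Fraction of requests that succeeded."""
    if not reqs:
        raise ValueError("no requests observed")
    return sum(r.ok for r in reqs) / len(reqs)


def percentile(values: list, p: float) -> float:
    """Nearest-rank percentile of raw samples, for 0 < p <= 100."""
    if not values or not 0 < p <= 100:
        raise ValueError("need samples and 0 < p <= 100")
    ordered = sorted(values)
    # Multiply before dividing to avoid float error in the rank computation.
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]
```

For ten requests with latencies of 10, 20, ..., 100 ms and one failure, `success_rate` returns 0.9, the p50 latency is 50 ms, and the p95 latency is 100 ms.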

Summary

DevOps as a Service is a complete, end-to-end, Kubernetes-based CI/CD solution that provides centralized management of the entire software development tools ecosystem (Jenkins, SCM, and Artifactory). It makes project onboarding effortless by automating the installation, configuration, and deployment of services, saving development teams significant time and manual effort. By leveraging features such as JTE, the solution simplifies Jenkins pipelines and turns them into reusable templates. The combination of Linkerd, Splunk, and Grafana monitors the infrastructure and automation, so developers can focus on development. Most importantly, DaaS provides best practices that can be reused across development teams, along with automation and integration of tools such as Jenkins, SCM, Artifactory, security compliance, metrics, and monitoring.

Sushma Sattigeri

Sushma Sattigeri is an engineering manager in the DevOps Tools and Services team in the Engineering Cloud Organization at NetApp. Her team provides tools and services that product teams use throughout their workflows, enhancing developer productivity and user experience. Sushma manages a wide portfolio of tools in the DevOps space, including Source Code Management, Defect Tracking, Project Management, Collaboration, Configuration Management, and CI/CD. In addition, her team provides extensive customer-facing Ansible and Terraform automation in support of DevOps. She has written this blog on behalf of her team.