BeeGFS for Beginners: A Technical Introduction

Joe McCormick

In my previous blog post, I introduced NetApp® and BeeGFS solutions and the new NetApp support for BeeGFS. In this post, my goal is to provide a technical introduction to the core components and general concepts behind the parallel cluster file system BeeGFS. It’s difficult to predict the exact target audience, but I hope that anyone who is considering deploying BeeGFS in their environment, or who simply needs a brief introduction, will find this post valuable.

If you’re familiar with parallel cluster file systems, you can skip this paragraph. For everyone else, denoting BeeGFS as a parallel file system indicates that files are striped over multiple server nodes to maximize read/write performance and scalability of the file system. The fact that it is clustered indicates that these server nodes work together to deliver a single file system that can be simultaneously mounted and accessed by other server nodes, commonly known as clients. To avoid confusion, I won’t make any further generalizations about how parallel cluster file systems work. The main takeaway is that clients can see and consume this distributed file system similarly to a local file system such as NTFS, XFS, or ext4.

Now let’s see what the buzz is about and jump into the high-level architecture of BeeGFS.

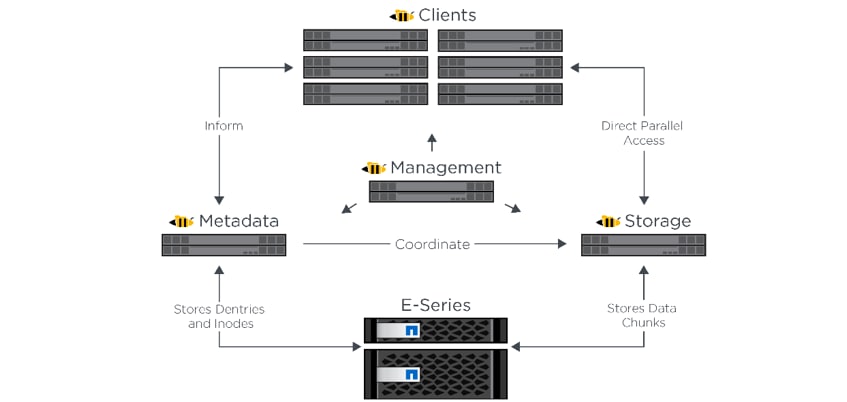

There are four main required components: the management, storage, metadata, and client services that run on a wide range of supported Linux distributions. These services communicate via any TCP/IP or RDMA-capable network, including InfiniBand (IB), Omni-Path (OPA), and RDMA over Converged Ethernet (RoCE). The BeeGFS server (management, storage, and metadata) services are user space daemons, while the client is a native kernel module (patchless). All components can be installed or updated without rebooting, and you can run any combination of services on the same node, including all of them.

Figure 1) BeeGFS solution components and relationships used with NetApp E-Series.

These services are covered in greater depth later in this blog post, along with a brief introduction to a number of supporting services and utilities. We’ll also briefly touch on how to get started with BeeGFS.

Required BeeGFS Services

First up is the BeeGFS management service. This service can be thought of as a registry and watchdog for all other services involved in the file system. The only required configuration is where the service stores its data. It only stores information about other nodes in the cluster, so it consumes minimal disk space (88KB in my environment), and access to its data is not performance critical. In fact, this service is so lightweight that you'll probably want to run it on a node that also hosts another service, likely a metadata node, rather than giving it a dedicated machine. You will have only one management service for each BeeGFS deployment, and the IP address for this node will be used as part of configuring all other BeeGFS services.

Next is the BeeGFS storage service. If you’re coming from another parallel file system, you may know this as an object storage service. Each BeeGFS storage service requires one or more storage targets, which are simply block devices formatted with a POSIX-compliant Linux file system. (I recommend NetApp E-Series volumes and XFS.) You will need one or more storage services for each BeeGFS deployment.

As the name implies, the BeeGFS storage service stores the distributed user file contents, known as data chunk files, in its storage targets. The data chunks for each user file are striped across all or a subset of storage targets in the BeeGFS file system. For flexibility, BeeGFS allows you to set the stripe pattern on a given directory. The pattern controls the chunk size, the number of storage targets, and which storage targets are used for new files in that directory.
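To make the striping idea concrete, here is a minimal sketch of how a byte offset in a file could map to a chunk and a storage target. It assumes a simple round-robin layout; the `StripePattern` class, the `locate` function, the target IDs, and the 512KiB chunk size are all illustrative choices of mine, not BeeGFS internals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StripePattern:
    """Hypothetical model of a stripe pattern (not a BeeGFS data structure)."""
    chunk_size: int   # bytes per chunk
    targets: tuple    # storage target IDs selected for this file


def locate(pattern, offset):
    """Return (target_id, chunk_index, offset_in_chunk) for a byte offset.

    Assumes chunks are handed out to the targets in round-robin order.
    """
    if offset < 0:
        raise ValueError("offset must be non-negative")
    chunk_index = offset // pattern.chunk_size
    target_id = pattern.targets[chunk_index % len(pattern.targets)]
    return target_id, chunk_index, offset % pattern.chunk_size


# Illustrative values only: four made-up target IDs and a 512KiB chunk size.
pattern = StripePattern(chunk_size=512 * 1024, targets=(101, 102, 103, 104))
```

In this model, a large sequential read touches every target in turn, which is where the parallelism comes from. A smaller chunk size spreads small files over more targets, while a larger one keeps each request on a single target for longer.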

Third is the BeeGFS metadata service. Other parallel file systems may refer to this as the MDS. Each BeeGFS metadata service requires exactly one metadata target, which again is simply a block device formatted with a POSIX-compliant Linux file system. (I recommend E-Series volumes and ext4.) You will need one or more metadata services for each BeeGFS deployment.

The storage capacity requirements for BeeGFS metadata are very small, typically 0.3% to 0.5% of the total storage capacity. However, it really depends on the number of directories and file entries in the file system. As a rule of thumb, BeeGFS documentation states that 500GB of metadata capacity is good for roughly 150,000,000 files when using ext4 on the underlying metadata target.
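The two rules of thumb above are easy to turn into quick arithmetic. The sketch below is only a back-of-the-envelope helper; the function names are mine, and the 1PB file system in the usage note is a made-up example.

```python
GB = 10**9
PB = 10**15


def bytes_per_file(metadata_capacity_bytes, file_count):
    """Average metadata capacity available per file."""
    return metadata_capacity_bytes / file_count


def metadata_capacity_range(total_capacity_bytes, low_permille=3, high_permille=5):
    """Apply the 0.3% to 0.5% rule of thumb using integer math.

    Returns (low_bytes, high_bytes).
    """
    low = total_capacity_bytes * low_permille // 1000
    high = total_capacity_bytes * high_permille // 1000
    return low, high
```

With these helpers, `bytes_per_file(500 * GB, 150_000_000)` works out to roughly 3.3KB of metadata capacity per file, and `metadata_capacity_range(1 * PB)` suggests setting aside between 3TB and 5TB for metadata on a hypothetical 1PB file system.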

The BeeGFS metadata service stores data describing the structure and contents of the file system. Its role includes keeping track of the directories in the file system and the file entries in each directory. The service is also responsible for storing directory and file attributes, such as ownership, size, update or create time, and the actual location of the user data file chunks on the storage targets.

Outside of file open/close operations, the metadata service is not involved in data access, which helps to avoid metadata access becoming a performance bottleneck. Another advantage is that metadata is distributed on a per-directory basis. This means that when a directory is created, BeeGFS selects a metadata server for that directory and contained files, but subdirectories can be assigned to other metadata servers, balancing metadata workload and capacity across available servers. This strategy addresses performance and scalability concerns associated with some legacy parallel cluster file systems.
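As a toy illustration of per-directory distribution, the sketch below assigns each new directory to a metadata server and keeps files with their parent directory. The round-robin choice is a stand-in I picked for simplicity; how BeeGFS actually selects a server is not covered in this post, and the `Namespace` class and server names are hypothetical.

```python
import posixpath
from itertools import cycle


class Namespace:
    """Toy model: every directory is owned by exactly one metadata server."""

    def __init__(self, metadata_servers):
        # Round-robin is an assumption for illustration, not BeeGFS's policy.
        self._next_server = cycle(metadata_servers)
        self.owner = {}

    def mkdir(self, path):
        """Create a directory and pick the metadata server that owns it."""
        self.owner[path] = next(self._next_server)
        return self.owner[path]

    def owner_of_file(self, file_path):
        """File entries live on the server that owns the parent directory."""
        return self.owner[posixpath.dirname(file_path)]
```

The point of the model is that a subdirectory can land on a different server than its parent, so a deep directory tree naturally spreads metadata load across all available servers.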

Fourth is the BeeGFS client service, which registers natively with the virtual file system interface of the Linux kernel. To keep things simple, the kernel module source code is included with the BeeGFS client and compilation happens automatically. No manual intervention is required when you update the kernel or BeeGFS client service. You do need to make sure that the installed version of the kernel-devel package matches the currently installed kernel version (or much head pounding may ensue). The client also includes a user space helper daemon to handle logging and hostname resolution.
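If you want to catch a kernel-devel mismatch before the client build fails, a small pre-flight check can help. This is a minimal sketch that assumes the common Linux convention that `/lib/modules/<release>/build` points at the headers for that kernel; the function names are mine, and your distribution may lay things out differently.

```python
import os
import platform


def kernel_build_dir(release, modules_root="/lib/modules"):
    """Path where build files for a kernel release are conventionally linked."""
    return os.path.join(modules_root, release, "build")


def headers_present(release=None, modules_root="/lib/modules"):
    """True if a build directory exists for the given (or running) kernel.

    os.path.isdir follows symlinks, so a dangling 'build' link counts as missing.
    """
    release = release or platform.release()
    return os.path.isdir(kernel_build_dir(release, modules_root))
```

Calling `headers_present()` with no arguments checks the running kernel. If it returns False, installing the kernel-devel package that matches the running kernel is the likely fix.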

By default, clients mount the BeeGFS file system at /mnt/beegfs, although this can be changed if desired. After querying the BeeGFS management service for topology details, clients communicate directly with storage and metadata nodes to facilitate user I/O. Note that there is no direct access from clients to back-end block storage or metadata targets as there is in some environments, such as StorNext. This avoids the headache of managing a large, potentially heterogeneous multipath environment.
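A quick way to confirm where BeeGFS is mounted on a client is to look at the kernel's mount table. The sketch below parses text in the `/proc/mounts` format and assumes the file system type is reported as `beegfs`; treat that string, the function names, and the sample line in the usage note as assumptions to verify on your own system.

```python
def beegfs_mounts(mounts_text, fs_type="beegfs"):
    """Return the mount points whose file system type matches fs_type.

    Each /proc/mounts line has the form: device mountpoint fstype options dump pass
    """
    mount_points = []
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) >= 3 and fields[2] == fs_type:
            mount_points.append(fields[1])
    return mount_points


def read_mount_table(path="/proc/mounts"):
    """Read the mount table of the running system."""
    with open(path) as handle:
        return handle.read()
```

On a client, `beegfs_mounts(read_mount_table())` would be expected to return `['/mnt/beegfs']` for a default installation, given a mount table line such as `beegfs_nodev /mnt/beegfs beegfs rw 0 0`.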

To maximize performance, the native BeeGFS client is recommended for all nodes that access the BeeGFS file system. The BeeGFS client provides a normal mountpoint, so applications can access the BeeGFS file system directly without needing special modification. You can also export a BeeGFS mountpoint by using NFS or Samba, or use BeeGFS as a drop-in replacement for Hadoop’s HDFS. Client support is currently available only for Linux, although upcoming releases will provide a native client for Windows.

Supporting BeeGFS Services and Utilities

The file system can be managed by using the beegfs-ctl CLI included with the beegfs-utils package. This utility must be run from a client node and is automatically installed with the client service. Built-in help is available by appending --help to any command. This package also includes the BeeGFS file system checker (beegfs-fsck), which is used to verify and correct file system consistency.
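If you end up scripting around beegfs-ctl, a thin wrapper keeps the command construction in one place. The sketch below builds a node-listing command using the `--listnodes` and `--nodetype` options as I know them from BeeGFS 7.x; confirm them with `beegfs-ctl --help` on your version. The function names and the restricted set of node types are my own choices.

```python
import subprocess

# Node types accepted by this sketch; beegfs-ctl itself may accept others.
NODE_TYPES = ("meta", "storage", "client")


def list_nodes_command(node_type):
    """Build the argument list for listing registered nodes of one type."""
    if node_type not in NODE_TYPES:
        raise ValueError(f"unsupported node type: {node_type!r}")
    return ["beegfs-ctl", "--listnodes", f"--nodetype={node_type}"]


def run(command):
    """Run a command on a client node and return its standard output."""
    result = subprocess.run(command, check=True, capture_output=True, text=True)
    return result.stdout
```

On a client node, `print(run(list_nodes_command("storage")))` would show the storage services that have registered with the management service.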

If GUIs are your preference, the Admon service (Administration and Monitoring System) provides a Java-based interface for managing and monitoring BeeGFS. The Admon back-end service runs on any machine with network access to the other services to monitor their statuses and collect metrics for storage in a database. The Java-based client GUI runs on a workstation or jump host and connects to the remote Admon daemon via HTTP.

There is also a BeeGFS Mon service that can be used to collect statistics from the system and write them to InfluxDB (a time series database). The Mon service includes predefined Grafana panels that can be used to visualize the data, or you can use alternative tools with the InfluxDB data. If you need graphical monitoring but not graphical management, I recommend this route over Admon.

Getting Started with BeeGFS

Typically, BeeGFS services are installed using your package manager of choice (for example, apt/yum/zypper) after adding the BeeGFS repositories. Configuration files for all BeeGFS services can be found at /etc/beegfs/beegfs-*.conf. Log files are located at /var/log/beegfs-*.log, with rotating archives kept as beegfs-*.log.old-*. BeeGFS scripts and binaries are located under /opt/beegfs/, and BeeGFS services are managed as systemd units.
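When you are checking several nodes, it can be handy to read settings out of these configuration files programmatically. The sketch below assumes the files use simple `key = value` lines with `#` comments; the function names are mine, and the sample keys in the usage note should be checked against the configuration files shipped with your version.

```python
import glob


def parse_beegfs_conf(text):
    """Parse 'key = value' lines, skipping blank lines and '#' comments."""
    settings = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        settings[key.strip()] = value.strip()
    return settings


def load_all_configs(pattern="/etc/beegfs/beegfs-*.conf"):
    """Return {path: settings} for every configuration file matching pattern."""
    configs = {}
    for path in sorted(glob.glob(pattern)):
        with open(path) as handle:
            configs[path] = parse_beegfs_conf(handle.read())
    return configs
```

For example, parsing the text `"# management host\nsysMgmtdHost = mgmt01\n"` yields `{'sysMgmtdHost': 'mgmt01'}`, which is a quick way to confirm that every service points at the same management node. The hostname `mgmt01` is a placeholder.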

I could write an entire blog post on deploying BeeGFS, but NetApp has already published a solution deployment guide. If you’re interested in further exploring performance numbers for E-Series and BeeGFS, download the reference architecture.

Although BeeGFS generally works well out of the box, exhaustive tuning and configuration options are available to maximize performance for a given workload or environment. Beginning in September 2019, NetApp also offers E-Series and BeeGFS solution support to help you get the most out of high-performance computing storage environments built on BeeGFS and E-Series.

I’d love to get your feedback on this post or to hear what you’re doing with BeeGFS in your environment. Drop me a line at joe.mccormick@netapp.com, or leave a comment below.

Joe McCormick

Joe McCormick is a software engineer at NetApp with over ten years of experience in the IT industry. With nearly seven years at NetApp, Joe's current focus is developing high-performance computing solutions around E-Series. Joe is also a big proponent of automation, believing if you've done it once, why are you doing it again.