Automate all of your data workflows


Mike Oglesby

We all know the feeling. There’s pressure to do more with less. The future of the business depends on it, but your to-do list just keeps getting longer, and you’re bogged down with your day-to-day processes and procedures. This feeling is all too familiar for the AI engineers who are tasked with constructing the future while keeping up with their current projects and tasks. Often, these current projects and tasks involve sourcing data, moving data, managing data, accessing data, and… you get the picture. They involve a lot of data, and working with data is complicated.

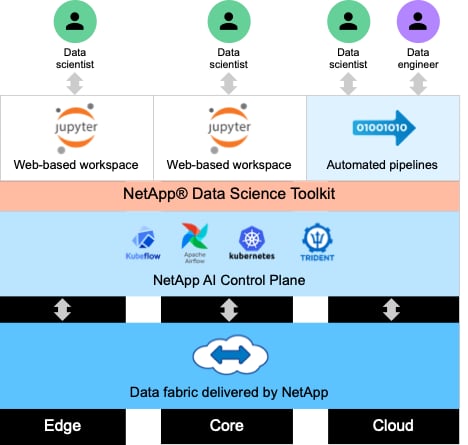

What if there were a better way? What if you could automate your data workflows in order to free yourself to work on all of those new projects that you’ve been wanting to get to? With NetApp, you can! We recently released NetApp® AI Control Plane, a refreshed reference architecture that can help you to save time. This Kubernetes-based solution stack allows you to choose between two popular open-source workflow automation tools – Kubeflow and Apache Airflow.

Kubeflow

Kubeflow is a popular open-source AI toolkit for Kubernetes. The Kubeflow project makes deployments of AI and ML workflows on Kubernetes simple, portable, and scalable. It works by abstracting away the intricacies of Kubernetes, allowing AI engineers and data scientists to focus on what they know best: AI. Kubeflow can run on any compute or cloud platform, freeing you to deploy our reference architecture wherever and whenever you like.

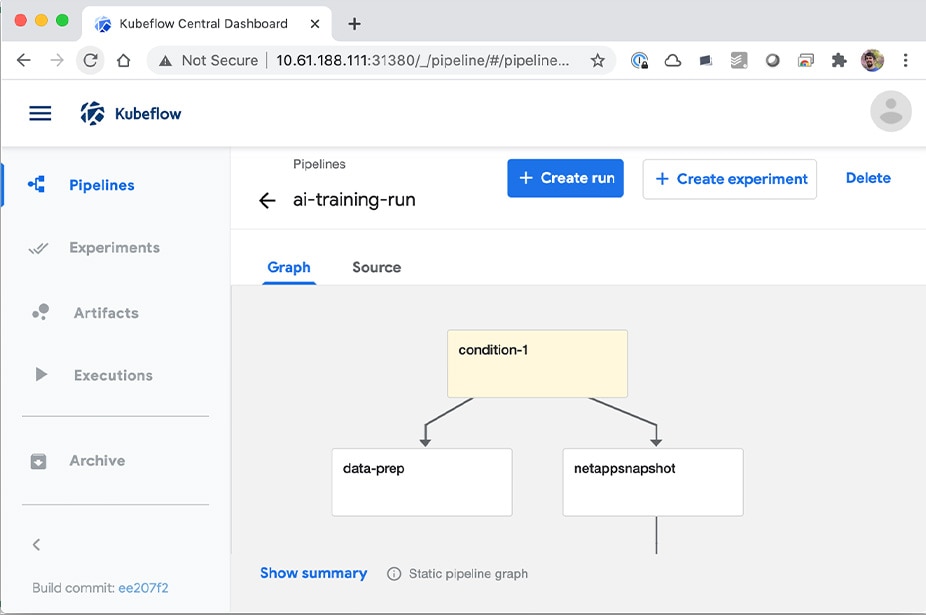

A key component of Kubeflow, Kubeflow Pipelines provides a platform and standard for defining and deploying portable and scalable AI and ML workflows. We have developed some example pipelines that demonstrate how advanced NetApp data management operations can be incorporated into automated workflows using the Kubeflow Pipelines framework. This framework allows you to automate such tasks as provisioning a new data science workspace, cloning a workspace to facilitate rapid experimentation, taking a NetApp Snapshot™ copy of a workspace for traceability or backup, and triggering the movement of data between data centers and/or clouds. Our example workflows are built around the NetApp Data Science Toolkit, so they are simple and approachable.

Apache Airflow

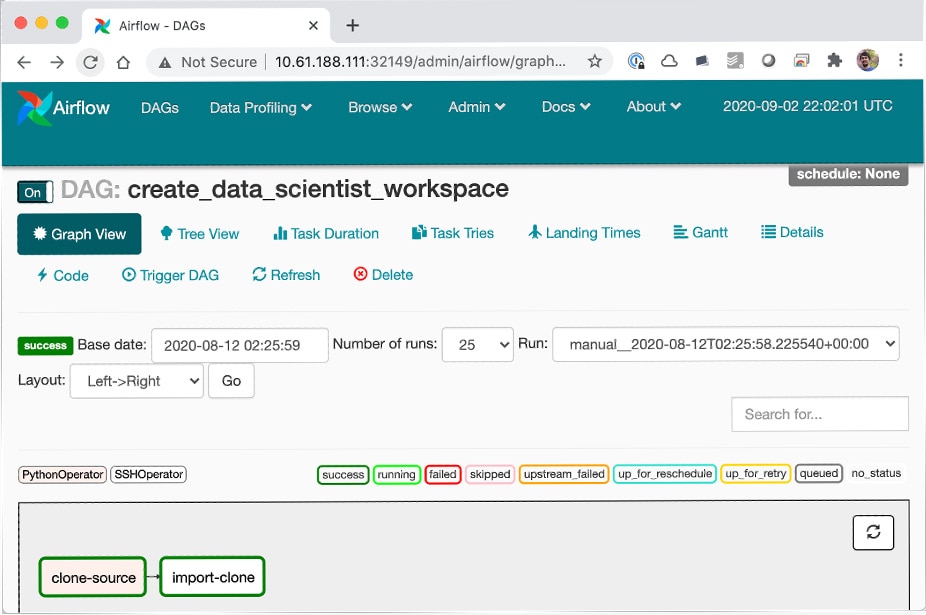

Apache Airflow is an open-source general-purpose workflow management platform that provides programmatic authoring, scheduling, and monitoring for complex enterprise workflows. Just like Kubeflow, it is compute-agnostic. You can deploy it anywhere. It is often used to automate ETL and data pipeline workflows, but it’s not limited to these types of workflows. Much like Kubeflow Pipelines, Airflow workflows are created via Python scripts, and Airflow is designed on the principle of “configuration as code.” Just as we have developed example pipelines for Kubeflow, we have also developed example workflows for the Airflow platform and the Apache ecosystem.
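To make the “workflow as code” idea a little more concrete, here is a minimal sketch in plain Python. It does not use the Airflow, Kubeflow Pipelines, or NetApp Data Science Toolkit APIs; the step functions, the `WORKFLOW` dictionary, and `run_workflow` are all hypothetical names invented for illustration. It only shows the general pattern: tasks and their dependencies are declared in a Python script, and a runner executes them in dependency order.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical placeholder steps. A real task would call a data management
# tool; these only record what would have happened.
def provision_workspace(log):
    log.append("provision workspace")

def snapshot_workspace(log):
    log.append("snapshot workspace")

def clone_workspace(log):
    log.append("clone workspace")

# The workflow is declared in code: task name -> (callable, upstream task names).
WORKFLOW = {
    "provision": (provision_workspace, set()),
    "snapshot": (snapshot_workspace, {"provision"}),
    "clone": (clone_workspace, {"snapshot"}),
}

def run_workflow(workflow):
    """Run each task after its upstream tasks and return the log of steps."""
    log = []
    # TopologicalSorter expects a mapping of node -> set of predecessors.
    graph = {name: deps for name, (_, deps) in workflow.items()}
    for name in TopologicalSorter(graph).static_order():
        task, _ = workflow[name]
        task(log)
    return log

if __name__ == "__main__":
    for step in run_workflow(WORKFLOW):
        print(step)
```

Orchestrators such as Airflow and Kubeflow Pipelines add scheduling, retries, and monitoring on top of this basic pattern, so treat the sketch as a mental model and not as a substitute for either platform.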

The best time to stop wasting time with manual tasks is right now. The best day to start automating your workflows is today. We’ve made it easy for you to automate your data management workflows with popular and widely used open-source tooling. What are you waiting for? Check out the NetApp AI Control Plane reference architecture and start automating today. To learn more about all of NetApp’s AI solutions, visit www.netapp.com/ai.
